The Explainability Illusion: An Overview

The financial world has always been shrouded in complexity, with intricate systems and algorithms driving decisions that impact economies globally. However, a new phenomenon has emerged, termed the 'Explainability Illusion.' This concept raises critical questions about the transparency and understanding of these systems, particularly in the context of Wall Street. As algorithms increasingly dictate trading strategies and financial decisions, the illusion of explainability can lead to dangerous misconceptions.

Understanding the Explainability Illusion

The Explainability Illusion refers to the false sense of understanding that stakeholders, from investors to regulators, have about how complex algorithms reach their decisions. These systems may produce results that seem logical and justifiable, yet the underlying mechanisms are often opaque and difficult to interpret.

In the realm of finance, this illusion can create significant risks. Investors may rely on algorithmic trading systems without fully grasping their operational intricacies. This reliance can lead to catastrophic outcomes, especially during market volatility when algorithms may behave unpredictably.

The Role of AI in Financial Decision-Making

Artificial Intelligence (AI) has revolutionized the financial sector, enabling faster and more efficient decision-making. However, the complexity of AI models often obscures their inner workings. For instance, deep learning models can analyze vast datasets and identify patterns, but the rationale behind their predictions can be elusive.

This lack of transparency can foster a dangerous reliance on AI, as stakeholders may assume that these systems are infallible. In reality, they can perpetuate biases and make decisions based on flawed data. The illusion of explainability can lead to overconfidence in these systems, resulting in significant financial losses.

The Dangers of Overconfidence

Overconfidence in algorithmic trading systems can have dire consequences. During the 2020 market crash, many investors faced substantial losses as algorithms executed trades based on pre-set parameters without considering the broader market context. This highlights the need for a more nuanced understanding of how these systems operate.
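To make "pre-set parameters" concrete, here is a minimal sketch of a fixed stop-loss rule. It is a hypothetical illustration, not a description of any real trading system: the function name `first_stop_loss_trigger`, the 5% threshold, and the price series are all assumptions chosen for the example.

```python
def first_stop_loss_trigger(prices, entry_price, max_drawdown=0.05):
    """Return the index of the first price at or below the stop level, or None.

    Hypothetical rule: it looks only at the price relative to a fixed
    threshold and has no notion of why the price moved.
    """
    stop_level = entry_price * (1 - max_drawdown)
    for i, price in enumerate(prices):
        if price <= stop_level:
            return i
    return None


# Illustrative prices only: a sharp dip followed by a recovery.
prices = [100.0, 98.0, 96.0, 94.0, 97.0, 101.0]
trigger = first_stop_loss_trigger(prices, entry_price=100.0)
exit_price = prices[trigger] if trigger is not None else None
```

In this made-up series the rule sells at 94, and the price later climbs back to 101. The rule did exactly what it was configured to do, which is the point: its behavior is easy to describe, but it carries no awareness of the broader market context.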

Moreover, the Explainability Illusion can hinder regulatory efforts. If regulators believe they understand the algorithms governing financial markets, they may fail to implement necessary safeguards. This can create a precarious environment where systemic risks go unaddressed.

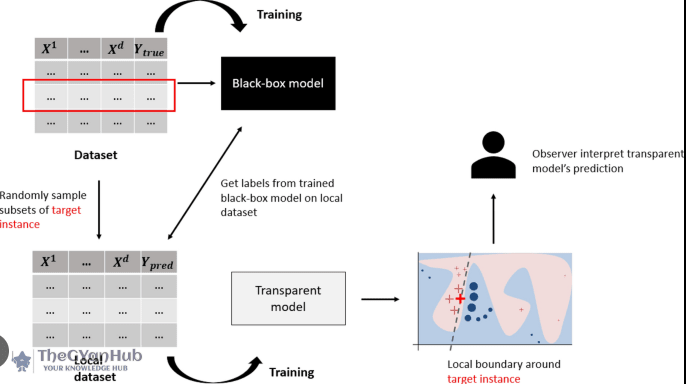

Addressing the Explainability Challenge

To mitigate the risks associated with the Explainability Illusion, stakeholders must prioritize transparency in algorithmic systems. This involves developing frameworks that make AI-driven decisions easier to understand and interpret. Financial institutions should invest in explainable AI technologies that provide insights into how algorithms arrive at their conclusions. Practical steps include:

Implementing robust testing and validation processes for algorithms.

Encouraging collaboration between data scientists and financial experts to bridge the knowledge gap.

Enhancing regulatory frameworks to ensure accountability in algorithmic trading.
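As one example of what an explainability check can look like, the sketch below uses permutation importance: shuffle one input at a time and measure how much the model's error grows. This is a generic, widely used technique picked for illustration, not a method the article prescribes. The `opaque_model` function, its feature names, and the synthetic data are assumptions standing in for a real black-box system.

```python
import random


def opaque_model(row):
    # Hypothetical stand-in for a black-box signal: it depends strongly on
    # momentum, weakly on volume, and not at all on the noise feature.
    momentum, volume, noise = row
    return 3.0 * momentum + 0.5 * volume


def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_features, seed=0):
    """Error increase when each feature column is shuffled on its own."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        # Rebuild each row with only feature j replaced by a shuffled value.
        permuted = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_squared_error(model, permuted, targets) - baseline)
    return importances


# Synthetic data: three independent standard-normal features per row.
data_rng = random.Random(42)
rows = [tuple(data_rng.gauss(0, 1) for _ in range(3)) for _ in range(500)]
targets = [opaque_model(r) for r in rows]

importances = permutation_importance(opaque_model, rows, targets, n_features=3)
```

Here the momentum feature shows the largest error increase, volume a much smaller one, and the noise feature none at all. A ranking like this is a useful sanity check, but it is also a reminder of the illusion itself: it says which inputs matter, not why the model combines them the way it does.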

Conclusion

The Explainability Illusion represents a significant challenge for Wall Street and the broader financial sector. As algorithms continue to shape the future of finance, it is crucial for stakeholders to recognize the limitations of their understanding. By fostering transparency and accountability, the industry can navigate the complexities of algorithmic trading while safeguarding against the inherent risks.
